Data Centres in Space?

The idea might seem like something out of a science fiction story, but it's more real than you think!

At ReadOn, we don’t just report the markets. We help you understand what truly drives them, so your next decision isn’t just informed, it’s intelligent.

For years, “the cloud” was a metaphor. Something abstract, invisible, safely grounded on Earth. That metaphor now seems to be breaking.

Google has disclosed work on Project Suncatcher, which examines whether computing infrastructure could operate in orbit using near-continuous solar power. Starcloud, backed by Nvidia, already trained a language model in orbit in November 2025. SpaceX’s Elon Musk says his Starlink constellation will evolve into orbital data centres. Jeff Bezos predicts gigawatt facilities in space within two decades. Former Google CEO Eric Schmidt bought a rocket company specifically to put data centres in orbit.

But why are these companies even considering expanding into space? Can it scale, and wouldn’t it simply raise their costs?

Let’s find out!

Why are we even considering setting up data centres in space?

Today, constructing a data centre costs between $7 million and $11 million per megawatt of capacity, or roughly ₹60–100 crore per MW. Facilities designed for AI workloads, with dense GPU clusters, can exceed $20 million per megawatt. At those rates, a standard 100-megawatt hyperscale campus requires roughly $700 million to $1.1 billion in upfront capital, before accounting for server hardware.

But construction is only the starting point.

Operational expenses have become the real pressure point. Maintenance alone absorbs close to 40% of ongoing costs, reflecting the complexity of running high-density hardware continuously. Electricity accounts for another 15–25%, depending on geography and cooling efficiency. The margin for error is thin. Even a relatively modest 30-megawatt facility must generate around $100 million in annual revenue to achieve a 10% internal rate of return. As computing density rises, that breakeven threshold keeps moving further out of reach.

AI workloads intensify this strain rather than merely adding volume. Training and deploying large language models now consumes electricity at industrial scale. A 2021 research paper by Google and UC Berkeley estimated that training GPT-3 required 1,287 megawatt-hours of electricity, producing over 550 tonnes of carbon dioxide. Crucially, this figure captures only the initial training run. Inference, fine-tuning, and repeated updates turn energy use into a permanent load. As AI shifts from single instances of training to continuous deployment, power demand grows massively instead of flattening.

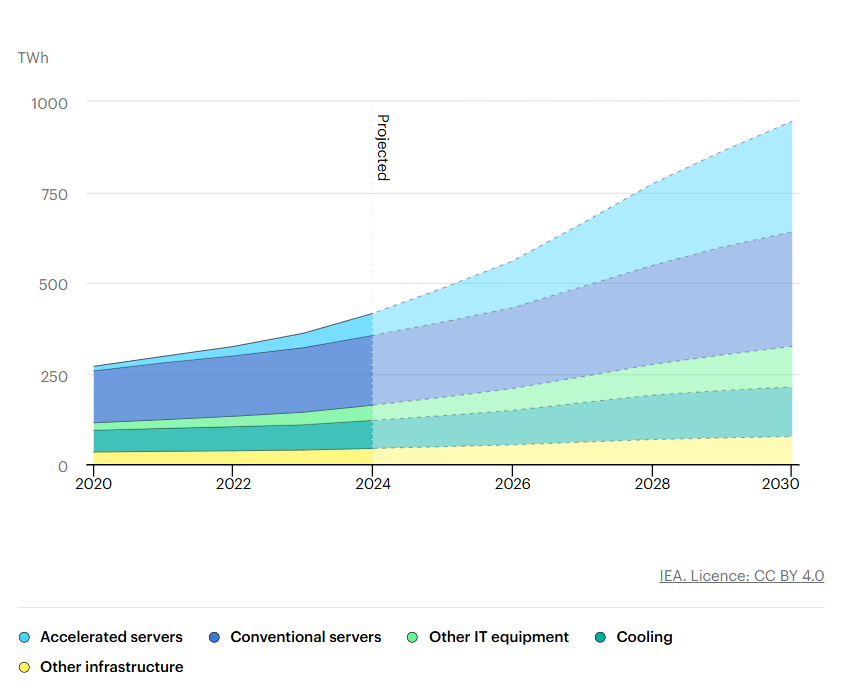

According to the International Energy Agency, data centres accounted for about 1.5% of global electricity consumption (around 415 TWh) in 2024, growing at roughly 12% per year over the last five years. With AI adoption accelerating, the IEA projects data centre power demand could double by 2030.
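To see why a doubling by 2030 is plausible, here is a minimal sketch that simply compounds the 2024 figure forward. It assumes the historical 12% growth rate stays constant, which is our simplification and not the IEA's own model; the function names are illustrative.

```python
import math

def project_demand(base_twh: float, annual_growth: float, years: int) -> float:
    """Compound a starting demand forward at a fixed annual growth rate."""
    return base_twh * (1 + annual_growth) ** years

def doubling_time_years(annual_growth: float) -> float:
    """Years needed for demand to double at a fixed annual growth rate."""
    return math.log(2) / math.log(1 + annual_growth)

BASE_TWH_2024 = 415.0   # IEA estimate quoted above
ANNUAL_GROWTH = 0.12    # assumption: the recent 12% pace simply continues

demand_2030 = project_demand(BASE_TWH_2024, ANNUAL_GROWTH, 2030 - 2024)
doubling = doubling_time_years(ANNUAL_GROWTH)

print(f"Projected 2030 demand: {demand_2030:.0f} TWh")
print(f"Doubling time at 12% growth: {doubling:.1f} years")
```

At a steady 12%, demand roughly doubles in about six years, so even without any AI-driven acceleration the 2024 figure would be close to twice as large by 2030.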

Critically, the bottleneck in most markets is not land scarcity but power availability on land already zoned for data centres. Developers increasingly talk about “powered land”, meaning parcels with confirmed access to sufficient grid capacity, as the real limiting factor for new builds. Projects with land and fibre in place still stall without large, reliable power connections. Land parcels in the US near substations, solar farms and hydroelectric facilities are commanding premium prices, often 2x to 4x what they were just 18 months ago.

Water usage mirrors electricity consumption.

Only 3% of global water is freshwater, and an even smaller portion is accessible and safe for human use. Large data centres can consume millions of gallons of water per day for cooling.

A data centre’s water footprint is the sum of three categories: on-site water usage, water used by the power plants that supply it, and water consumed in manufacturing its processor chips. Usage depends on factors including location, climate, water availability, size, and IT rack chip density. In hotter climates, like India, data centres need more water to cool the building and equipment. As more facilities are built to serve AI requests, chip density is also growing, which raises room temperatures and requires more water chillers at the server level. Most data centres use a combination of chillers and on-site cooling towers to keep chips from overheating.

Approximately 80% of the water (typically freshwater) withdrawn by data centres evaporates, with the remainder discharged to municipal wastewater facilities.

Why Space Solves Problems Earth Can’t

The physics and economics of space are fundamentally different.

Start with power. Satellites placed in dawn-dusk sun-synchronous orbits ride along the boundary between day and night, so they stay in near-continuous sunlight. Google’s Project Suncatcher is built around this logic, targeting orbital paths where solar arrays can harvest energy almost 24/7, free from clouds, atmospheric loss, or night cycles. Proponents estimate that solar energy generated in such orbits could be up to 22 times cheaper than on the ground, and with no atmosphere to attenuate sunlight, panel output rises by roughly 40%.

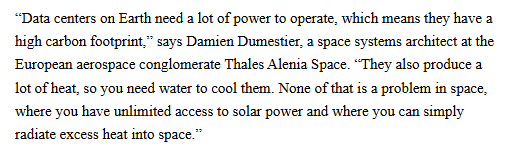

Cooling is where the advantage becomes decisive. As Nvidia founder Jensen Huang has noted, a two-ton GPU rack on Earth often requires nearly the same mass again in cooling infrastructure. In space, that constraint largely disappears. Projects like Thales Alenia Space’s ASCEND concept rely on passive radiators mounted on the shaded side of orbital platforms, allowing heat to be dissipated directly into the vacuum. There are no chillers, no HVAC systems, and no water consumption. The roughly 40% of energy overhead that terrestrial data centres devote to cooling can, in principle, be eliminated.

As Damien Dumestier of Thales Alenia Space explained to MIT Technology Review, data centres on Earth face a double bind. They consume vast amounts of electricity and generate enormous heat, which then requires water-intensive cooling. In orbit, solar power is continuous and excess heat can simply be radiated away, sidestepping both constraints simultaneously.

Connectivity, counterintuitively, may also improve. Light travels roughly 47% faster through vacuum than through glass fibre, so optical laser links in space can deliver data with lower propagation delay than fibre-optic cables on Earth. Several space-compute proposals, including Starcloud’s orbital platform designs, assume that latency-sensitive workloads could benefit from direct space-to-space links before data is beamed back to ground stations. Positioned above coastal population clusters, where over half of humanity lives, orbital data centres could, in specific use cases, outperform congested terrestrial fibre routes.
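The speed advantage of vacuum over glass is about propagation delay, not bandwidth. A rough sketch of the difference follows, assuming a refractive index of 1.47 for silica fibre (a typical value, used here as an assumption) and a hypothetical 1,000 km path of equal length in both media. Real routes differ in length, and an orbital path adds the trip up and down.

```python
C_VACUUM_KM_S = 299_792.458      # speed of light in vacuum, km/s
FIBRE_REFRACTIVE_INDEX = 1.47    # assumption: typical silica fibre

def one_way_delay_ms(distance_km: float, refractive_index: float = 1.0) -> float:
    """Propagation delay in milliseconds over a path in a given medium."""
    speed_km_s = C_VACUUM_KM_S / refractive_index
    return distance_km / speed_km_s * 1000

PATH_KM = 1_000.0  # hypothetical path length, same for both media

vacuum_ms = one_way_delay_ms(PATH_KM)
fibre_ms = one_way_delay_ms(PATH_KM, FIBRE_REFRACTIVE_INDEX)

print(f"Vacuum laser link: {vacuum_ms:.2f} ms")
print(f"Optical fibre:     {fibre_ms:.2f} ms")
print(f"Fibre is slower by a factor of {fibre_ms / vacuum_ms:.2f}")
```

The ratio depends only on the refractive index, so the advantage holds at any distance as long as the space route is not much longer than the ground route.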

Deployment speed offers another advantage. Microsoft’s underwater Project Natick demonstrated that factory-sealed computing modules could move from assembly to operation in under 105 days.

Orbital data centre concepts build on a similar philosophy. Google’s approach, described by Sundar Pichai as deploying “tiny racks” in space, emphasises modularity. Instead of committing billions upfront, operators could launch a handful of satellites, validate performance, and then scale incrementally to dozens or hundreds. There is no land acquisition, no environmental permitting, and no negotiation with local utilities before capacity goes live.

From a climate and economics perspective, this shift is significant. Thales Alenia Space estimates that its ASCEND roadmap, beginning with a 50-kilowatt proof of concept by 2031 and scaling to one gigawatt by 2050, could generate several billion euros in cumulative returns while materially reducing energy consumption and emissions. Startups such as Starcloud argue that fully solar-powered orbital facilities could deliver up to ten times lower carbon emissions than natural-gas-powered data centres on Earth.

The Reality Check: Where the Physics Push Back

For all its theoretical elegance, the case for space-based data centres collides quickly with hard physics and unforgiving economics.

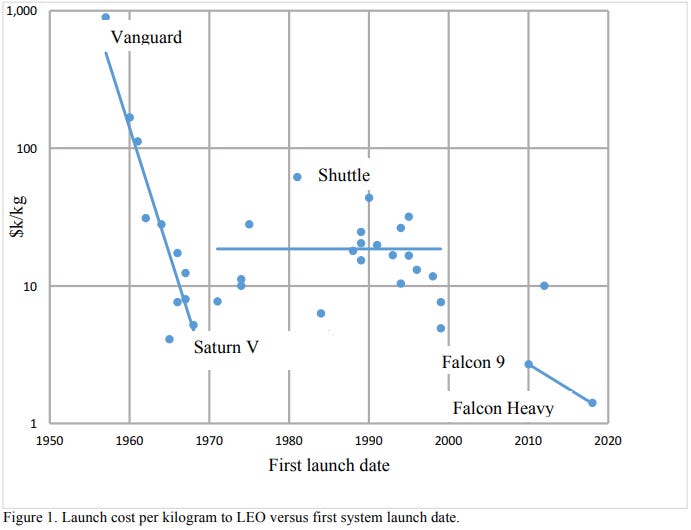

The most immediate barrier is launch cost. Even after a decade of progress driven by reusable rockets, sending payloads to orbit still costs around $1,400 per kilogram.

Internal estimates from Google’s Project Suncatcher suggest costs would need to fall below $200 per kilogram by the mid-2030s for orbital data centres to approach parity with terrestrial economics. That implies another seven-fold reduction from today’s already optimistic baseline. Early deployments remain expensive by any measure. Starcloud’s first satellite launch alone is estimated to have cost millions of dollars, and scaling to multi-gigawatt capacity would require hundreds of billions of dollars in launch costs before accounting for hardware.
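To put that seven-fold gap in perspective, the sketch below works out how quickly launch prices would have to fall each year to get there. The ten-year horizon is our assumption (roughly 2025 to the mid-2030s), and the function names are illustrative.

```python
def reduction_factor(current_cost: float, target_cost: float) -> float:
    """How many times cheaper the target is than the current cost."""
    return current_cost / target_cost

def required_annual_decline(current_cost: float, target_cost: float, years: int) -> float:
    """Constant yearly price decline (as a fraction) needed to hit the target."""
    return 1 - (target_cost / current_cost) ** (1 / years)

CURRENT_USD_PER_KG = 1_400.0   # today's approximate launch cost, from the text
TARGET_USD_PER_KG = 200.0      # parity threshold cited for Project Suncatcher
YEARS = 10                     # assumption: roughly 2025 to the mid-2030s

factor = reduction_factor(CURRENT_USD_PER_KG, TARGET_USD_PER_KG)
decline = required_annual_decline(CURRENT_USD_PER_KG, TARGET_USD_PER_KG, YEARS)

print(f"Reduction needed: {factor:.0f}x")
print(f"Required annual price decline: {decline:.1%}")
```

Under these assumptions, launch prices would need to drop by nearly 18% every year for a decade, with no pauses.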

Hardware durability compounds the challenge. In orbit, semiconductors are continuously exposed to cosmic radiation, degrading performance over five to six years. On Earth, upgrades are incremental and continuous. In space, replacement means new launches. Research into Google’s TPUs shows that logic cores are relatively tolerant, but high-bandwidth memory degrades much faster. And that’s the most expensive component in modern AI systems. Radiation hardening helps, but at a cost: heavier shielding, lower clock speeds, and specialised fabrication processes all erode the very performance gains that justify moving compute off-planet.

Cooling, often framed as the decisive advantage, also becomes more complex at scale. While vacuum allows heat to be radiated away, thermodynamics still applies. Solar panels absorb nearly all incoming radiation and convert only a fraction into electricity; the rest becomes heat, as does essentially all the energy used for computation. A radiator near room temperature sheds only a few hundred watts per square metre, so dissipating gigawatt-class heat loads requires vast radiator surfaces, on the order of a million square metres per gigawatt. These structures add mass, deployment risk, and cost, diluting the simplicity of “free cooling.”
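The scale of those radiators follows from the Stefan-Boltzmann law, which limits how much heat a surface can radiate at a given temperature. The sketch below is an order-of-magnitude estimate only. It assumes a flat panel radiating from both sides at 300 K with an emissivity of 0.9, and it ignores sunlight and Earth's infrared falling on the radiator; all of these are our simplifying assumptions, not figures from any specific design.

```python
STEFAN_BOLTZMANN = 5.670374419e-8  # W / (m^2 K^4)

def radiated_flux_w_m2(temp_kelvin: float, emissivity: float) -> float:
    """Heat radiated per square metre of surface (one side) to cold space."""
    return emissivity * STEFAN_BOLTZMANN * temp_kelvin ** 4

def radiator_area_m2(heat_watts: float, temp_kelvin: float = 300.0,
                     emissivity: float = 0.9, sides: int = 2) -> float:
    """Panel area needed to reject a heat load, ignoring absorbed radiation."""
    return heat_watts / (radiated_flux_w_m2(temp_kelvin, emissivity) * sides)

flux_300k = radiated_flux_w_m2(300.0, 0.9)
area_1gw = radiator_area_m2(1e9)              # 1 GW of waste heat
area_1gw_hot = radiator_area_m2(1e9, 350.0)   # same load, hotter radiator

print(f"Flux per side at 300 K: {flux_300k:.0f} W/m^2")
print(f"Area for 1 GW at 300 K: {area_1gw / 1e6:.2f} million m^2")
print(f"Area for 1 GW at 350 K: {area_1gw_hot / 1e6:.2f} million m^2")
```

Because radiated power scales with the fourth power of temperature, running the radiator hotter shrinks it quickly, but chips must then run hotter too, which is its own engineering trade-off.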

Maintenance may be the hardest constraint of all. Microsoft’s Project Natick offers a revealing parallel. Its underwater data centre experiment achieved eight times higher reliability than land-based facilities, yet Microsoft shut the program down. The reason was not performance but flexibility. Computing hardware evolves faster than infrastructure. Retrieving, upgrading, or repairing sealed systems proved too slow for an industry that reinvents itself every 18 months. Orbital systems face the same issue, magnified. As researchers note, fixing problems in orbit remains far from straightforward, even with robotics and automation.

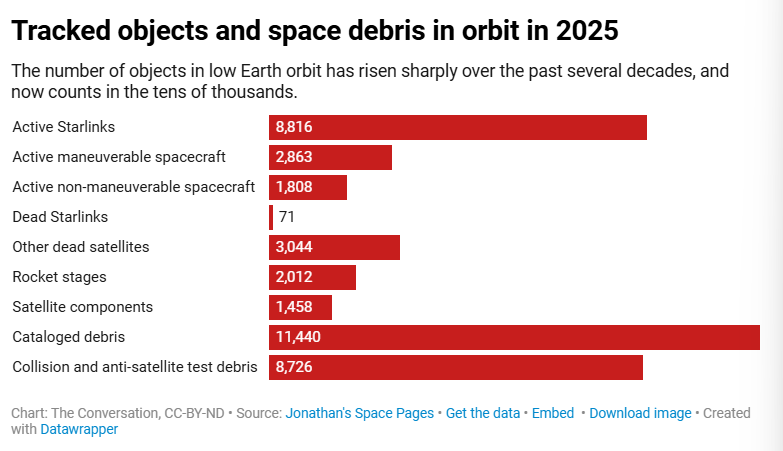

Orbital debris adds a systemic risk. The U.S. Space Force tracks roughly 40,000 objects larger than a softball, but millions of smaller fragments travel through orbit at lethal speeds.

In just the first half of 2025, SpaceX’s Starlink executed over 140,000 collision-avoidance manoeuvres. Any collision involving a data-centre-class satellite risks creating additional debris, pushing orbits closer to Kessler syndrome, where cascading impacts render entire orbital bands unusable. Scaling compute in space means scaling this risk as well.

Even the environmental case is unsettled. While orbital data centres eliminate local water use and grid emissions, lifecycle analyses tell a murkier story. Studies from Europe suggest that once rocket launches, manufacturing, and atmospheric re-entry are included, space-based facilities could generate significantly higher emissions than terrestrial equivalents. Much of this pollution occurs high in the atmosphere, where rocket exhaust can damage the ozone layer. For countries already balancing growth with environmental stress, this is not a clean trade-off.

The Bottom Line

Obstacles exist, yet investment continues.

Starcloud has raised capital from Y Combinator, Andreessen Horowitz, and Sequoia. Axiom Space plans orbital compute nodes by 2027. Thales Alenia Space has secured European Commission backing. In India, startups like TakeMe2Space are sketching long-term roadmaps for solar-powered orbital infrastructure. Early demonstrations work. Starcloud has already run AI inference in orbit and returned results to Earth.

This is what makes the question difficult. The issue is not technical feasibility. It is economic inevitability.

Global AI infrastructure spending is projected to exceed $6.7 trillion by 2030, even as terrestrial constraints like power, water, permitting, and grid capacity tighten. Two decades ago, deploying thousands of satellites for global internet access sounded implausible. Today, Starlink operates at that scale routinely.

Orbital data centres may follow a similar path. Or they may resemble Microsoft’s underwater experiment: a technically successful detour that reshapes terrestrial design rather than replacing it. For now, Google calls Project Suncatcher a moonshot: ambitious, demanding, and uncertain. Whether it becomes routine depends less on physics, which clearly works, and more on whether launch costs collapse, radiation-hard computing advances, debris risks are managed, and orbital economics ever outperform increasingly nuclear- and renewable-powered data centres on Earth. There’s still a long way to go before data centres reach outer space and stay there.

Until then, ReadOn.